The Exponential AI Evolution Framework

The SaaSacre: How Xemes, Spans, and Friction Triggered a $1 Trillion Repricing - A Forensic Analysis of the New Winners and Losers

TL;DR

We are moving into a world where AI doesn’t just assist; it operates.

Think of it like teaching a child to ride a bike. At the start, you need training wheels. You hold the saddle white-knuckled while the child tentatively starts pedaling. There are wobbles and a few falls. Soon, they are moving away while you chase protectively after. Before you know it, they are riding by themselves. Eventually, they are the driver of the journey, and you have become the passenger. You stop thinking about the mechanics; you are just along for the ride.

This is the trajectory of the human and the AI. We are the parent; the AI is the child. The bike is the work that needs to be done. Letting go of the bike happens the moment you stop deciding and the AI starts acting.

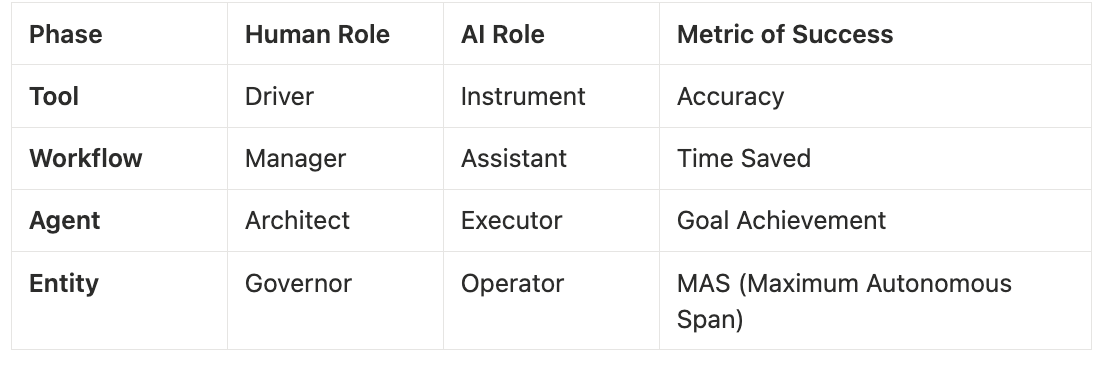

There is a sequential ladder here:

Tool: AI is a draft or a sort that stops when you close the tab.

Workflow: It moves through processes while you watch.

Agent: You define “good” and the machine finds the path.

Entity: A system that owns the outcome, operates across time, and manages itself inside boundaries you set.

System Shift: A reimagining of the entire system.

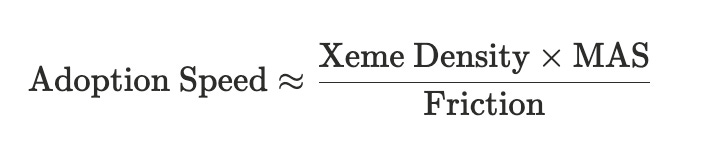

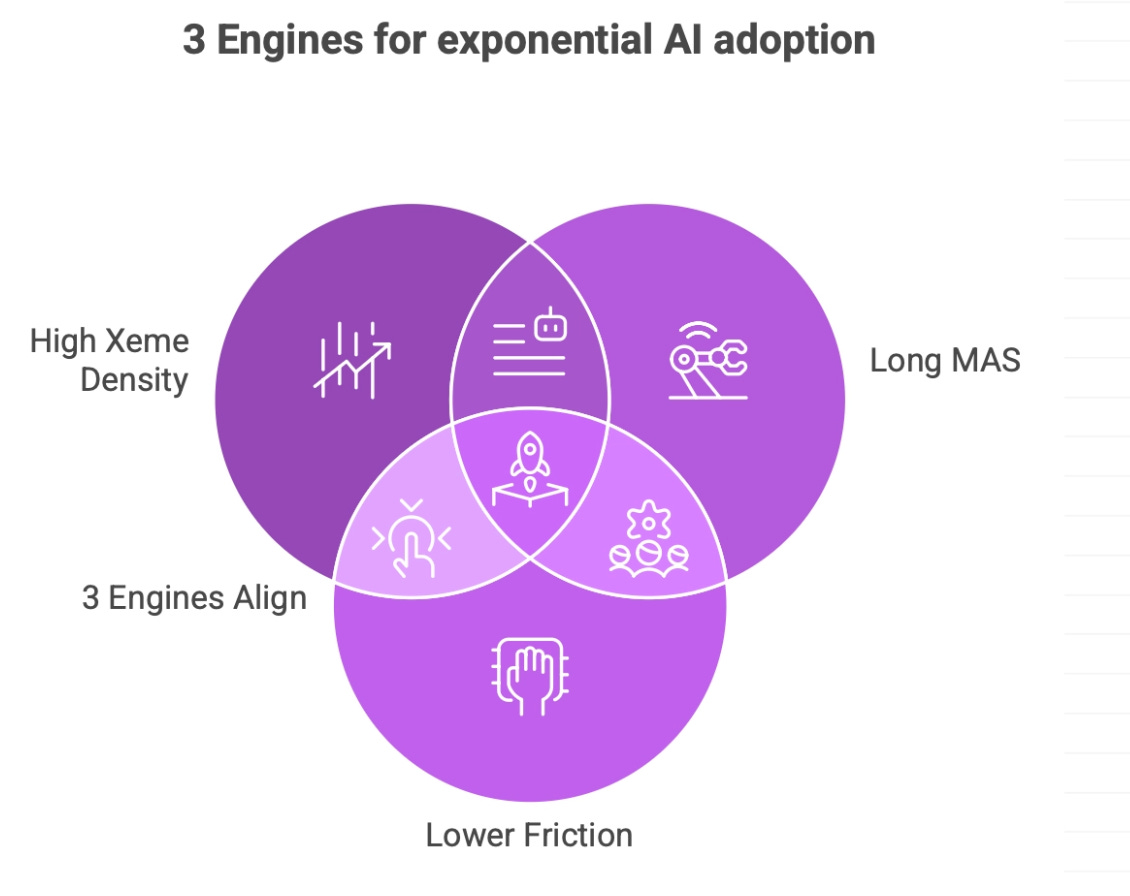

The speed of this climb is driven by three engines:

Xeme Density (sticky lessons),

Maximum Autonomous Span (time without human rescue), and

Friction (the resistance of the real world).

Because of the Jevons Paradox, as work gets cheaper, we won’t do less of it; we will flood the world with it. Volume will explode. While fewer humans are needed for the “doing,” total activity increases so much that humans are pulled further into the flow, supervising systems that were intended to be mere tools. This isn’t about human irrelevance; it’s about who sets the objective and who is caught inside the flow.

1. The Shift

This transition has nothing to do with AI getting “smarter” in the traditional sense. Benchmarks are not useless (they are essential for tracking the raw capability of a model), but as a predictor of industry change they are often a distraction. A model can pass a bar exam and still be incapable of running a business process. The real metric of change is Outcome Ownership.

When AI is a tool, you hold the thread. If you stop, the work stops. You are the heartbeat of the process, providing every spark of initiative. But when AI moves into workflows, it carries a short chain of logic: scheduling, checking rules, routing data. You are still responsible for the result, but you are no longer touching every link. You are moving from a “doer” to a supervisor of a process.

Then comes the agent, which carries intent. You describe the destination, and the AI navigates the terrain. But the “Entity” is a different species. An entity carries the outcome itself. It lives across time. It has memory, motive, and reach. It doesn’t wait for a prompt; it acts inside constraints you defined weeks ago. [For more, see The Entity Framework]

The differentiator is the time horizon. A tool lives in a flickering moment of interaction. An entity lives across days and weeks. If a system can hold a process across time, it begins to shape the result long before you see it. This is why Maximum Autonomous Span (MAS) is the best yardstick you can actually observe. It isn’t the only engine, but it tells you exactly where the seat of power is located.

How long can the system run before a human has to step in and rescue it?

Five minutes is a demo. An hour is assistance. But a day or a week of autonomy changes the structural reality of a company. When MAS is short, you are the driver. When it stretches, the system becomes the operator and the human becomes the exception: the person called in only when the machine hits a wall.
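One way to make that question measurable is to log every human rescue and take the longest gap between them. The sketch below assumes a hypothetical log of rescue timestamps for a single observed run; the function name `max_autonomous_span` and the log format are illustrative assumptions, not part of any real tool.

```python
from datetime import datetime, timedelta


def max_autonomous_span(start, end, rescues):
    """Return the longest stretch the system ran without a human rescue.

    start, end: datetimes bounding the observed run.
    rescues: datetimes at which a human had to step in (hypothetical log).
    """
    if end < start:
        raise ValueError("end must not be earlier than start")
    if any(r < start or r > end for r in rescues):
        raise ValueError("every rescue must fall inside the observed run")
    # Each rescue splits the run into shorter autonomous stretches.
    points = [start] + sorted(rescues) + [end]
    return max(later - earlier for earlier, later in zip(points, points[1:]))


# Illustrative run: one day of operation with two rescues.
run_start = datetime(2026, 1, 1, 9, 0)
run_end = datetime(2026, 1, 2, 9, 0)
rescue_log = [datetime(2026, 1, 1, 10, 0), datetime(2026, 1, 1, 22, 0)]
span = max_autonomous_span(run_start, run_end, rescue_log)
```

Measured this way, MAS is a property of the log rather than of the model: the same system can show a longer span simply because it was allowed to run longer before anyone intervened.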

This is the bend in the curve. It isn’t driven by raw IQ; it’s driven by the length of the leash. When a system can hold an entire sequence from start to finish - Find, Compare, Decide, Execute, Measure, Adjust - the work reorganizes itself. The process just stops needing so many hands.

Now add Xeme Density. A xeme is a lesson that sticks [See the Xeme Framework]. In a system with high xeme density, every improvement is locked in. It doesn’t repeat yesterday’s mistake. High xeme density pushes capability up, while high MAS lets that capability run. When they align, ownership shifts upward while volume expands outward. The steering wheel might still be there, but it’s no longer connected to the tires.

To see where you sit in this shift, don’t ask if the AI can do your task. Ask if it can run the whole process from start to finish without you.

Summary of the “Shift”

2. The Curve Is Not Magic

People draw S-curves because they look dramatic, but the curve itself explains nothing. The inflection point is not a mystical event; it is a mechanical transition. In our context, the curve starts bending faster the moment responsibility moves from a human hand to a digital system.

The vertical axis of this curve isn’t “intelligence” - it is Outcome Ownership. At the bottom, you own the result completely. At the top, the system carries the outcome. To visualize this transition, we look at the ladder of ownership:

Tools: AI handles a single task (writing a draft, sorting data). The work stops the moment you close the tab.

Workflows: AI carries a process. It moves data from A to B while you supervise the flow.

Agents: You define the objective. The machine navigates the terrain, presenting you with a finished result to approve.

Entities: The system owns the outcome itself. It operates across days, manages its own memory, and acts within constraints you set weeks ago.

System Shift: The old system isn’t needed anymore - the AI has created a new system rendering the old way of thinking about the problem irrelevant.

At the lower rungs, you are the bridge providing the initiative between steps. The gains feel incremental because you are still the owner. But as the system moves up the ladder, it begins to handle the handoffs between tasks autonomously. This isn’t just a change in speed; it’s a relocation of control.

The bend in the curve appears when the machine stops pausing for human permission at every link in the chain. When the handoffs disappear, the curve turns vertical. The human is moved from the operator’s seat to the observation deck. To understand how this happens, we have to look at the engines that drive the climb.

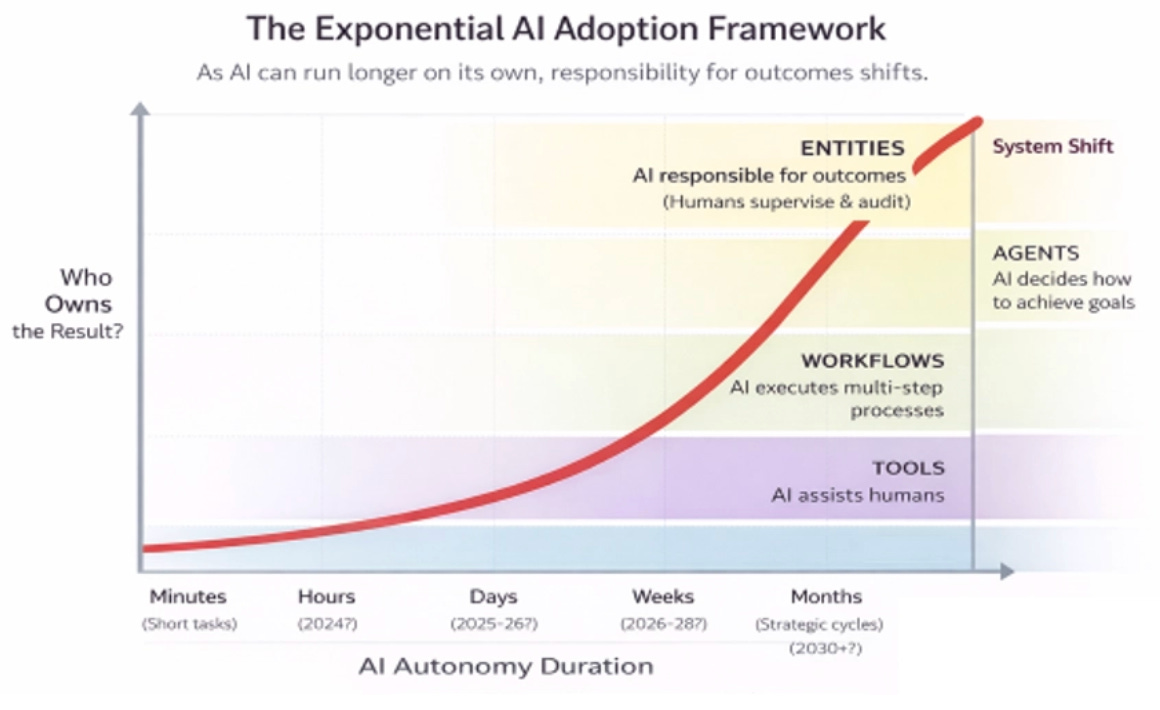

3. Engine One: Xeme Density

If you strip everything back, the first engine is Xeme Density.

A xeme is a lesson that sticks. It isn’t a one-time fix or a clever answer; it is a fundamental shift in default behavior. High xeme density means the system tries something, sees what happened, and changes what it does next time.

Most human organizations are low-density. We are forgetful. A salesperson figures out a better pitch, but it stays in their head. A developer fixes a bug, but the lesson isn’t shared across the codebase. To improve the organization, you have to train every individual human, one by one. In software, an improvement can be embedded instantly. If an experiment works, the code changes. The next user gets the new behavior by default.

Benchmarks track raw capability, but Xeme Density tracks locked-in improvement. Simple observable proxies are the release cadence of improvements that persist, a declining repeat-failure rate, and a shrinking human escalation rate. A model can score in the 99th percentile on a test, as we saw with the raw reasoning scores of DeepSeek-V3, and still feel like a static tool if its lessons don’t propagate into the workflow. The real power shift occurs when the system moves through a cycle of Attempt → Test → Compare → Keep Winner → Deploy.
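Two of those proxies can be computed from ordinary operational logs. The following is a minimal sketch, assuming a hypothetical list of failure signatures in time order and simple task counts; the function names and the definition of a "repeat" (a signature already seen earlier in the log) are assumptions made for illustration.

```python
def repeat_failure_rate(failure_signatures):
    """Share of failures whose signature had already occurred earlier in the log."""
    seen = set()
    repeats = 0
    for signature in failure_signatures:
        if signature in seen:
            repeats += 1
        seen.add(signature)
    return repeats / len(failure_signatures) if failure_signatures else 0.0


def escalation_rate(tasks_completed, tasks_escalated):
    """Share of all handled tasks that needed a human to step in."""
    total = tasks_completed + tasks_escalated
    return tasks_escalated / total if total else 0.0
```

If lessons are sticking, both numbers should trend downward from one period to the next; a flat repeat-failure rate suggests the system keeps making yesterday’s mistake.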

AI doesn’t just produce; it proposes, tests, refactors, and deploys. When this cycle runs continuously, the ground moves. The system isn’t just improving the product; it is improving the machinery that allows it to improve. AI writes code that improves developer tools. Better tools shorten the test cycles. Shorter cycles mean more experiments. More experiments mean higher Xeme Density.
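The Attempt → Test → Compare → Keep Winner → Deploy cycle can be pictured as a keep-the-winner loop. This is only a sketch under stated assumptions: `propose` and `score` are hypothetical callables standing in for whatever generates a candidate change and whatever test measures it, and "deploy" is reduced to replacing the current default.

```python
def improvement_cycle(baseline, propose, score, rounds):
    """Run a keep-the-winner loop and return (deployed_version, its_score)."""
    deployed = baseline
    best = score(deployed)
    for _ in range(rounds):
        candidate = propose(deployed)   # Attempt
        result = score(candidate)       # Test
        if result > best:               # Compare
            # Keep Winner and Deploy: the candidate becomes the new default.
            deployed, best = candidate, result
    return deployed, best


# Toy example: candidates step upward by 1 and the test rewards closeness to 5.
final_version, final_score = improvement_cycle(
    baseline=0,
    propose=lambda current: current + 1,
    score=lambda value: -(value - 5) ** 2,
    rounds=10,
)
```

The point of the sketch is the ratchet: a losing candidate is discarded, so the deployed default never gets worse, and every later attempt starts from the best version found so far.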

It is a self-feeding loop where the starting point for tomorrow is higher than today. A “smart” model will eventually be overtaken by a system that simply learns faster and keeps what it learns. High Xeme Density builds the pressure; it ensures that every day, the machine is slightly more capable of holding the thread of a process. It’s a quiet accumulation of competence that eventually makes human intervention look like an expensive error.

4. Engine Two: Maximum Autonomous Span (MAS)

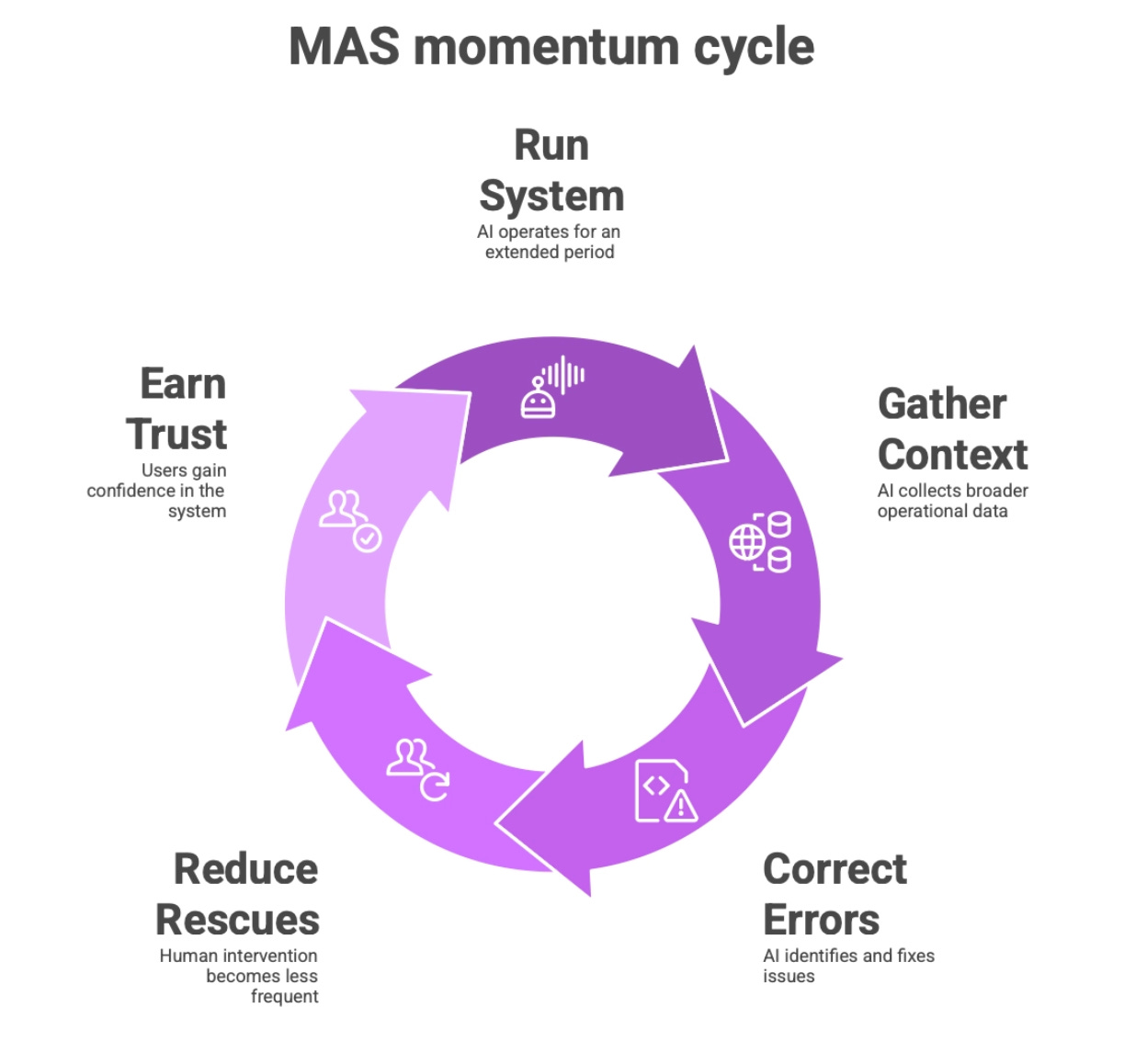

If Xeme Density determines how fast the system improves, Maximum Autonomous Span (MAS) determines how long it can be left alone.

MAS is not a technical metric for a lab; it is a brutal, practical measurement of trust and reliability. How long can the system operate in the real world before a human has to step in and rescue it?

Five minutes of autonomy is a distraction. The system drafts a response, you check it, and the work pauses. You are still the driver; the AI is just the power steering. But an hour of autonomy starts to feel like delegation. It can carry a small sequence of actions while you hover nearby. When that span reaches a day, it changes the fundamental frequency of a business. The system begins to hold context across multiple steps. It acts, observes the result, and adjusts without asking for your permission at every intersection. A week of autonomy is where the organizational structure begins to rot. If a system can carry a full operational sequence reliably for seven days, the team built to manage that sequence no longer has a reason to exist.

We saw this play out with the launch of Clawdbot in early 2026. While the industry was arguing over the IQ scores of models like DeepSeek, Clawdbot focused on an innovation in MAS. It allowed users to set up agents that manage a sequence of tasks across entire channels (Slack, Gmail, Telegram) on a “heartbeat” schedule. It didn’t just answer a prompt; it held the thread for days. It proved that in the real world, the length of the leash is often more consequential than the intelligence of the dog.

Work is almost never a single action. It is a process with a “before” and an “after.” There is memory, consequence, and adjustment. Writing a function is a task. Building, testing, deploying, and refining a feature is a process. At low MAS, AI helps inside the process, but humans are the handoff layer that stitches the chain together. As MAS stretches, the system begins to hold larger segments of that chain. It remembers what happened at step one when it reaches step five. It does not pause for a “yes/no” after every move.

MAS creates its own momentum. A longer run leads to broader context. Broader context allows for better error correction. Better correction means fewer human rescues. This is how trust is earned: not through a marketing pitch, but through the silence of a system that just works. You can see this most clearly in software because the friction is lower. AI used to write snippets; then it managed modules. Now, it manages the entire build-test-deploy cycle. Each longer span reveals patterns about failure and recovery, making the system more stable precisely because it is allowed to run.

MAS is the clearest indicator of where ownership truly sits. You can have the smartest system in the world, but if its MAS is short, you’re still just a glorified babysitter. The real bend in the curve happens when the system holds the process long enough that the humans move above it. If you want to know if a domain is close to the edge, do not ask how smart the model sounds. Ask how long it can run without you.

5. Engine Three: Friction

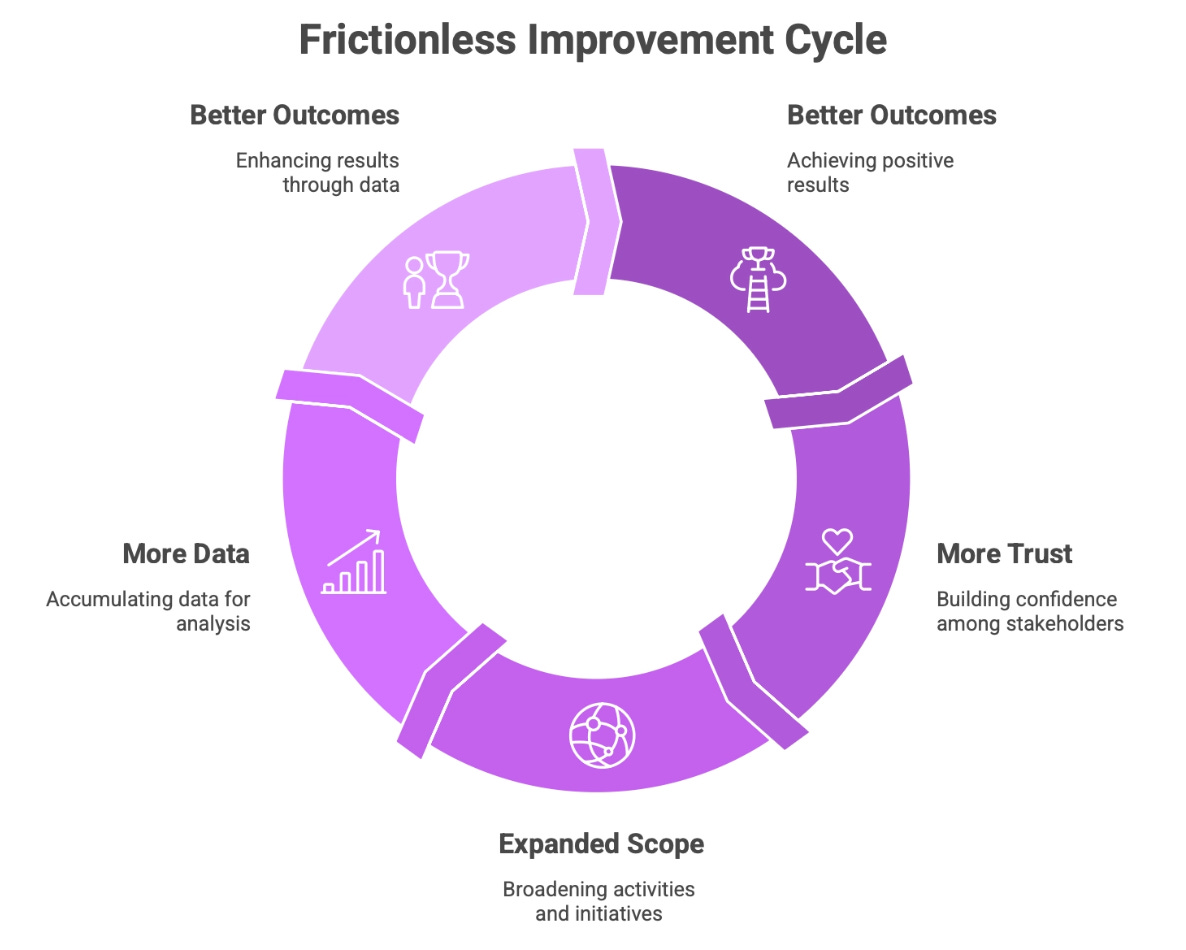

Xeme Density pushes capability upward. MAS lets that capability operate across time. Friction decides whether any of it is actually allowed to make a difference.

Friction is the practical resistance of the real world. It is the weight of trust, the rigidity of law, the terror of liability, and the headache of data integration. You can build a system that learns at lightning speed and runs for weeks on end, but if nobody is willing to let it near something important, the system remains a curiosity. The curve stays flat in the real world even if it is vertical in the lab.

This is why some industries look like a graveyard of innovation while others feel like a battlefield. In aviation, medicine, or finance, a mistake isn’t just a bug; it’s a catastrophe. Friction is heavy here. Even if Xeme Density rises and MAS stretches, the system remains boxed in by essential guardrails. It’s the reason Sensitivity is the primary axis of the AI-Jobs Framework: jobs with high friction are protected not necessarily by their complexity, but by the sheer cost of being wrong.

Software is the exception because the brakes are light. Software is information. Errors are annoying, not fatal. You can roll back a deployment, patch a bug in minutes, and distribute the fix to the world instantly. But don’t mistake low friction for no friction. It simply shifts shape. In hiring, it looks like legal exposure; in education, it looks like political trust; in healthcare, it looks like risk tolerance.

Friction also has its own silent loop: Better Outcomes → More Trust → Expanded Scope → More Data → Better Outcomes. When the machine wins, the leash gets longer. When the leash gets longer, the system sees more of the world. This expanded scope feeds the Xeme engine, which in turn increases capability.

You cannot analyze adoption by looking at capability alone. Xeme Density creates the pressure, and MAS carries it, but Friction decides how much of that pressure is actually permitted to move the world. When friction loosens, even slightly, in a domain where the other two engines are already redlining, the change looks sudden. It isn’t sudden. It is just years of pent-up pressure finally being allowed to move the needle. The engines don’t wait for permission to get better; they only wait for permission to act.

6. When the Engines Align

On their own, the three engines look like incremental progress. High Xeme Density looks like a steady climb in performance. Long MAS looks like a useful delegation tool. Lower friction looks like a growing comfort with the technology. But the true inflection point, the bend in the curve, only appears when all three forces line up and lock in.

This alignment was crystallized in early February 2026 with the release of Claude Cowork and Claude for Excel. These weren’t just smarter models; they were systems designed for high Maximum Autonomous Span. For the first time, a system demonstrated the ability to carry a complex process, such as reading a company’s 10-K, building a discounted cash flow (DCF) model, and generating a sensitivity analysis, over a 48-hour period without a human “babysitter” providing the initiative between steps.

If Xeme Density rises while MAS remains short, you are left with sharper tools but no change in the seat of power. The drafts are better, the suggestions are more intelligent, but you are still the one carrying every decision across the finish line. You are simply a more productive version of your old self. Conversely, if MAS stretches while Xeme Density is weak, you get “long-running mediocrity”: a system that operates for hours but fails to actually move the baseline. If both engines rise while friction remains heavy, the system improves in a vacuum, incapable of touching anything that matters.
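The combinations described above amount to a small decision table. The sketch below encodes it with three boolean flags; the flags, the function name `diagnose`, and the fallback label for combinations the article does not discuss are all assumptions made for illustration.

```python
def diagnose(xeme_high: bool, mas_long: bool, friction_low: bool) -> str:
    """Map the state of the three engines to the outcome the article describes."""
    if xeme_high and mas_long and friction_low:
        return "ownership shifts to the system"
    if xeme_high and mas_long:
        # Both engines rise, but friction remains heavy.
        return "improves in a vacuum"
    if xeme_high:
        # Lessons stick, but the span is short.
        return "sharper tools, same seat of power"
    if mas_long:
        # The system runs long, but lessons do not stick.
        return "long-running mediocrity"
    return "not covered by the article"  # assumption: no engine is engaged
```

Only one row of the table produces a change in ownership, which is why each engine on its own looks like incremental progress.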

The alignment of these forces creates a structural shift where ownership of the process moves from the individual to the system. It does not feel like a sudden revolution; it manifests as the quiet disappearance of handoffs. You notice fewer interruptions, fewer required approvals, and a shrinking queue of check-ins. The system begins to handle larger segments of the chain without pausing for permission. Once this alignment occurs, the process is no longer a chain of human handoffs; the system runs the process, and the humans move above it.

This shift is then pressurized by the Jevons Paradox. As these sequences become radically cheaper to execute, we do not run fewer of them; we run more. We build more features, launch more campaigns, and spin up more experiments. Surface activity actually increases, even as the steering of each individual sequence concentrates upward. The alignment of the engines does not reduce total activity; it fundamentally relocates where the decisions are made. The machine doesn’t eliminate the work; it just stops waiting for you to tell it what to do next.

7. The SaaSacre/SaaSocalypse

Software is the cleanest laboratory we have because it’s the first place where all three engines have reached a state of total alignment. High Xeme Density, rapidly rising MAS, and low friction have created a combination that is fundamentally unstable for traditional business models. For a decade, the SaaS model was a fortress: build a tool, improve it at a human pace, and charge a subscription. You locked customers in with workflows and data, using time as your moat.

That fortress is now under terminal pressure. The mechanical alignment set the stage for the ‘SaaSacre’ of February 2026, and a cluster of high-visibility releases helped investors update their beliefs quickly. The S&P North American Software Index plummeted 15% in a single week (Feb 3-10, 2026), marking a $1 trillion loss in market capitalization. This was the second half of a two-stage market repricing. Exactly one year prior, in early 2025, DeepSeek demonstrated what happens when you drive Xemes faster. By achieving state-of-the-art performance for a fraction of the traditional training cost, DeepSeek proved that the “intelligence” layer was being commoditized through extreme efficiency. That 2025 shift attacked the cost of the engine; the 2026 shift attacked the necessity of the human operator.

The 2026 crash was the cold realization of this arithmetic. Several large software names suffered 40%-50% drawdowns; ServiceNow fell about 42% in three months and Salesforce roughly 26% in the rout, with both hitting 52-week lows as investors realized that a ‘System of Action’ designed to manage human tickets is redundant when the process is resolved by the machine itself. Thomson Reuters (-28%) cratered as the OpenClaw (formerly Clawdbot) ‘Nexus’ protocol proved AI could carry a full legal research process without human intervention.

Investors are questioning whether subscription tools can maintain their leverage when agents can replicate their core value for a fraction of the cost. Traditional SaaS economics depend on three pillars: high switching costs, slow feature replication, and human-limited improvement cycles. High Xeme Density destroys the last two. If agents can generate features at high speed and those improvements stick, parity arrives in weeks, not years. The advantage of time evaporates.

Rising MAS attacks the first pillar. Consider a legacy Jira instance with years of custom workflows. Historically, “switching” was a nightmare of data migration and retraining. Now, imagine an agent that can ingest those workflows, map the logic, and rebuild a bespoke internal alternative in an afternoon, complete with automated data migration. In this scenario, the “pain” of switching drops toward zero. Recreating core functionality becomes cheaper than paying for the license.

Layer in the Jevons Paradox. When building software becomes cheaper, we don’t build less; we build more. The total number of sequences in the world explodes, making the surface look incredibly busy. Yet, while activity rises, ownership concentrates upward. If a SaaS company remains merely a tool inside a sequence, and that sequence moves upward into an agent or entity, the tool becomes mere infrastructure. Infrastructure is plumbing: it is far easier to replace and carries lower margins. The SaaSocalypse is not a crash; it is a migration of value to the safest place left: above the sequence.

8. Jevons and the Human Layer

It is easy to view this shift as a simple swap: AI improves, systems carry the work, and humans quietly exit the stage. But that is a linear delusion. To understand the actual scale of this transformation, you have to look back to the 1800s, when Britain ran on coal.

As steam engines became more efficient, each ton of coal produced more power. The logical assumption was that coal consumption would fall. It didn’t. Because power became cheaper, people used it everywhere. Factories multiplied, railways expanded across continents, and ships crossed oceans they previously couldn’t reach. Total coal consumption skyrocketed even as each individual engine became “leaner.” This is the Jevons Paradox, and it is hitting knowledge work with the force of a tidal wave.
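The coal story can be reproduced with a few lines of arithmetic. The sketch below assumes a constant-elasticity demand curve for the service being produced (power, in the coal case); that functional form, the parameter names, and the base demand of 100 are assumptions chosen for illustration and do not come from the article.

```python
def resource_use(efficiency, elasticity, base_demand=100.0):
    """Total resource consumed under constant-elasticity demand (assumed model).

    efficiency: units of service produced per unit of resource.
    elasticity: how strongly demand for the service responds to its price.
    """
    # A more efficient engine makes each unit of service cheaper.
    price_per_service_unit = 1.0 / efficiency
    # Cheaper service is demanded in greater quantity.
    service_demanded = base_demand * price_per_service_unit ** (-elasticity)
    # Resource needed to deliver that much service.
    return service_demanded / efficiency


before = resource_use(efficiency=1.0, elasticity=1.5)
after_elastic = resource_use(efficiency=2.0, elasticity=1.5)
after_inelastic = resource_use(efficiency=2.0, elasticity=0.5)
```

Under this model, doubling efficiency raises total consumption whenever elasticity is above 1 and lowers it when elasticity is below 1. The paradox therefore depends on demand being elastic, which is the implicit bet the article makes about knowledge work.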

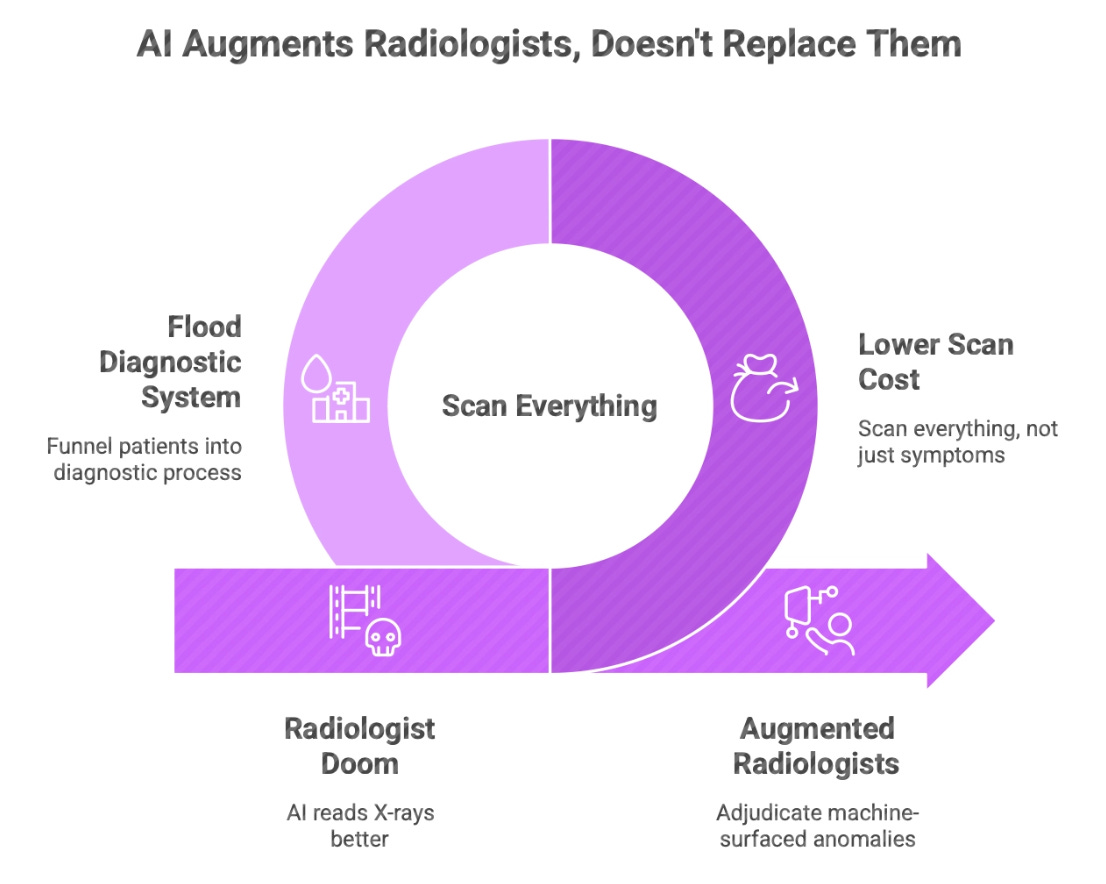

We see this today in diagnostic medicine. A few years ago, it was assumed human radiologists were doomed because AI could read X-rays better. But as the cost of a scan dropped toward zero, hospitals started scanning everything. Patients previously sent home are now funneled into a massive diagnostic process. Because the ‘coal’ (the scan) became cheap, we flooded the system with it. We actually need more radiologists now, but their role has shifted: they are no longer ‘finding the needle’; they are adjudicating the 10,000 ‘gray area’ anomalies the machine surfaces.

When running a process becomes cheaper, you don’t do less of it; you flood the world with it. Lower cost expands activity until it fills every available corner of the market. Two movements are happening simultaneously, and they are pulling in opposite directions:

Ownership of the process is moving upward into autonomous systems.

The total number of processes is expanding outward.

This is why the current moment feels so chaotic. You see growth everywhere: more output, more experiments, more launches. It looks like expansion, yet control is concentrating. Inside each campaign, the system is now choosing the target, allocating the budget, and adjusting for the result. Humans define the high-level objective, but the process itself runs inside the machine.

Humans do not disappear; they are simply repositioned. In the radiology example, they are no longer “finding the needle”; they are adjudicating the 10,000 “gray area” anomalies the machine surfaces. They are moved into the Exception Layer.

Jevons makes this shift invisible because the sheer volume of activity hides the concentration of power. Revenue grows and activity increases, masking the fact that the steering wheel has become dangerously narrow. There will be no shortage of work to do. There will only be the question of who sets the objective, and who is merely a passenger in the flow.

9. What Survives

When ownership of the process migrates, the question of what survives becomes a question of where the ground is still solid. The ground isn’t disappearing; it is simply moving upward. In a world where Xeme Density is low and MAS is short, value sits in the interface: the buttons, the dashboard, and the workflow tools where humans spend their time clicking. In a world of high Xeme Density and long MAS, value moves toward outcomes.

If a system can carry an entire process from start to finish, the individual tool inside that process becomes a commodity. It becomes a replaceable part of a larger engine. What survives is not “better features,” but the ownership of the objective layer.

The companies and products that resist being hollowed out are those that lean into three specific behaviors:

Internalizing Xeme Density: They don’t treat AI as a bolt-on feature or a side-panel chatbot. They embed it so deeply that the product evolves continuously based on its own performance. The baseline shifts every week. Every lesson the system learns about user behavior or error rates is locked into the code, making the gap between them and the laggards grow wider by the hour.

Extending MAS: They stop selling “assistance” and start selling “execution.” They move from “drafting a response” to “managing the entire pipeline.” The longer they can hold the thread without a human rescue, the higher they climb above the commodity layer. They aren’t selling you a seat; they are selling you a result that stays “finished.”

Weaponizing Friction: They integrate so deeply into the messy, high-friction reality of a business, with its legal risks and complex data silos, that pulling them out becomes a surgical risk.

We saw this forensic divide during the SaaSacre of February 2026: while horizontal tools like Salesforce (-26%) and ServiceNow (-42%) cratered as agents began bypassing their interfaces, “High-Friction” vertical incumbents like Veeva, Guidewire, and Workday held their ground. Veeva is the ultimate case study: by migrating its life-sciences CRM off the Salesforce platform and onto its own Vault infrastructure, it stopped being a “layer of tasks” and became the “Plumbing of Truth” for global pharma. It survived because it owns the regulatory and compliance-heavy data layers that a generic agent like Claude Cowork is fundamentally not permitted to touch.

The software that fails is the software that remains a thin layer inside a process that an agent can now orchestrate. If your product is just that layer, you are merely infrastructure. Infrastructure is essential, but it is plumbing; it is rarely where the premium margins or the power reside.

This is the cold logic of the SaaSocalypse. Tools get squeezed, workflow platforms get compressed, and the entities that consolidate the outcome capture the value. You can see it in the strategy: are you charging for access to an interface, or are you charging for performance against a goal? In a high-MAS world, no one cares how the work gets done; they only care that it is completed. The engines don’t eliminate value; they relocate it to the only place that matters: the ownership of the “what,” not the “how.”

10. The Personal Layer

All of this feels like a distant corporate forecast until you bring it back to yourself. It is easy to watch companies move up the ladder; it is far harder to admit where you personally sit on it. This framework isn’t a theory about software; it is a map of your own positioning in a hierarchy that is being rebuilt in real time.

As Xeme Density rises and MAS stretches, processes migrate. When they move, humans are forced to reposition. There are only two places left to sit: Above the process or Inside it.

If you define the objective, set the constraints, decide which trade-offs are acceptable, and carry the final weight of the consequences, you are above the process. The system is your instrument. But if the system defines the steps, sets the pace, and hands you tasks to complete or “verify,” you are inside the process. You have become the biological processor for the exceptions the machine hasn’t learned to handle yet.

This creates a fork in the road for the individual. You are either moving to the Governance Layer, where you design the objectives and police the boundaries of the machine, or you are stuck in the Exception Layer. The Exception Layer is where you handle the “messy 5%” the AI can’t solve. It feels like work, but you are no longer the architect of the flow; you are simply the biological component that keeps the machine from stalling.

The danger is that both roles look identical from the outside. Both involve a desk, a dashboard, and a set of deliverables. The difference is subtle and structural; it is found in who initiates the next action. In a low-MAS world, almost all knowledge work sits above the sequence because the system is too brittle to hold the thread. Humans are the link that stitches the steps together. You are the one who remembers the context and adjusts for the errors.

But as MAS increases, the “stitching” becomes a feature of the system. You might still be involved (reviewing, approving, and remaining “accountable”), but the sequence is no longer yours. You are no longer deciding; you are merely witnessing a decision that was made miles upstream.

The risk is not a sudden firing. It is a slow, quiet evaporation of agency. If your role depends on holding a process that MAS is about to absorb, your layer will eventually be pressurized out of existence. This isn’t a warning; it is a structural logic. High Xeme Density ensures the machine improves; long MAS ensures it can carry the responsibility. When they line up, the sequence moves. The question isn’t whether AI can do your task today. The question is whether you are holding a steering wheel that is no longer connected to the tires.

11. What to Watch

Frameworks are only useful if they help you see the landslide before the ground moves. If this model is right, you have to stop looking at the superficial noise of model IQ, demo videos, and benchmark scores. They are lagging indicators, echoes of a shift that has already happened. Instead, you have to watch the processes.

Start with Maximum Autonomous Span (MAS). Ask one practical question: how long can the system run in the real world before a human has to rescue it?

Five minutes is noise. It’s an assistant that still needs you to hold its hand.

An hour is a sequence. You can step away for coffee, but you can’t leave the room.

A day is a structural shift. The system starts to hold context across its own mistakes.

A week is the bend in the curve. When a system can run a full operational cycle for seven days without a human rescue, the team that used to manage that cycle is already obsolete.
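The four thresholds above can be turned into a simple classifier for an observed span. This is a sketch, not a calibrated scale: the article names the anchor points (five minutes, an hour, a day, a week), while the cut-offs between them and the function name `mas_tier` are assumptions.

```python
from datetime import timedelta


def mas_tier(span):
    """Label an observed autonomous span using the article's four anchor points."""
    if span >= timedelta(weeks=1):
        return "bend in the curve"
    if span >= timedelta(days=1):
        return "structural shift"
    if span >= timedelta(hours=1):
        return "sequence"
    # Anything shorter than an hour is treated as noise (assumed cut-off).
    return "noise"
```

Tracking the tier for one domain over successive quarters is a rough way to see whether the span is stretching or standing still.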

Watch the MAS quietly stretching in specific domains. The bend never announces itself with a press release; it shows up as a system that simply stops asking for your help.

Then look for Xeme Density. Is the system just generating answers, or are the improvements sticking? Watch whether the baseline shifts week to week. Look for the moments where the default behavior of the software changes without a human meeting or a manual code commit. If every mistake the system makes becomes a lesson it never forgets, the density is high. That builds a kind of pressure that eventually breaks the old model.

Monitor the Friction. Watch the language of the market. When vendors stop selling “features” and start selling “guaranteed outcomes,” the ownership of the sequence has moved. When dashboards stop being “tools for humans” and become “control layers for autonomous systems,” the center of gravity has shifted. Watch for the shrinking of exception queues. If the system is resolving its own errors internally, it is cutting the tether to the human operator.

Friction is not a bug; it is a moat. While horizontal platforms like Salesforce (-26%) and ServiceNow (-42%) were gutted in the February 2026 rout, companies like Veeva and Guidewire held firm. They didn’t survive because their AI was better; they survived because they own high-risk, regulated data environments that a generic agent is not permitted to touch. They have weaponized the complexity of the real world to ensure a human (or a highly governed system) must stay in the loop.

Finally, look at the financial tells. Markets reprice structure before revenue catches up. If investors start rewarding companies that own performance metrics rather than seat-based usage, pay attention. Valuation gaps between tool-layer and outcome-layer businesses are the market’s way of pricing the migration of value.

At the individual level, the tell is even simpler. Are you initiating the action, or are you just responding to the system’s prompt? The signs are rarely dramatic. They show up as fewer handoffs, fewer approvals, and more autonomy inside the machine’s boundaries. When the system no longer waits for you at every step, the shift is finished.

12. The Close

Step back from the immediate noise. This is not ultimately a story about chatbots, smarter models, or the sudden collapse of subscription software. Those are just the symptoms. The real story is the migration of processes.

The $1 trillion wipeout of February 2026 was the market’s way of pricing the end of the “Seat.” We are moving from a world of tools to a world of entities. Value is no longer sitting in the “how” (the interface you click); it has migrated to the “what” (the outcome that stays finished). You are either the person who owns the outcome, or you are part of the plumbing.

When a system can learn quickly, run long enough to carry a meaningful process of work, and is permitted to act by the world around it, it stops waiting for you. That is the mechanical moment ownership shifts. Early on, this transition feels like a gift: a helpful hand on the saddle. Then, it feels like delegation. Finally, you realize the machine is initiating, adjusting, and deciding inside boundaries you defined weeks ago. You are still present, but you are no longer steering. This isn’t a dramatic revolution; it is a structural handoff.

High Xeme Density ensures the baseline keeps rising. Long MAS ensures the system can hold the thread across time. Lower Friction ensures it can touch outcomes that matter. When these three forces lock in, the structure around the process inevitably reorganizes. Teams change shape, pricing models compress, and entire categories of software blur into the background. New layers of value appear, while old ones are relegated to the plumbing of the industry.

In software, we are seeing this alignment first because the digital engines are clean and the brakes are light. In other domains, friction may delay the bend, but it will not cancel it. Meanwhile, the Jevons Paradox ensures our world will be filled with more processes, not fewer. Activity will expand. Systems will get busier. Humans will stay busy, too, but the only thing that will concentrate is control.

The engines do not eliminate the need for human intent; they simply relocate where that intent is applied. If you own the objective and the constraints, the system works for you. If you sit inside the span the system now carries, you work inside the system.

The system will not announce when it stops waiting for you.

Bibliography

I. The 2026 “SaaSacre” & Market Repricing

The Software Index Crash (Feb 2026): Reports from S&P Global Market Intelligence confirm the S&P North American Technology Software Index dropped 15% in the week of February 3-10, 2026. This was the sector’s most violent contraction since 2008.

ServiceNow & Salesforce Drawdowns: Nasdaq data from February 6, 2026, shows ServiceNow (NOW) hitting a 52-week low with a 42% decline over a 3-month period. Reuters reports Salesforce (CRM) losing over 26% in the same rout as investors fled headcount-based revenue models.

II. Technical Triggers (Xemes & MAS)

DeepSeek Efficiency (Early 2025): The release of DeepSeek V3.2 proved that reasoning-capable models could be trained and run at 10% of the cost of legacy frontier models, marking the start of the “Xeme Efficiency” era.

OpenClaw (formerly Clawdbot) Nexus Protocol: The OpenClaw 1.2 release in January 2026 introduced the “Nexus” framework, standardizing how autonomous agents manage multi-directory tasks and persistent memory across 48-hour windows (High MAS).

Claude Cowork (Feb 2026): Anthropic’s launch of Claude Cowork was one of the high-salience triggers that sharpened investor expectations that fast-advancing AI tools would upend software economics, especially by making longer autonomous spans feel imminent inside enterprise tools.

III. The Jevons Paradox & Survivors

Radiology Paradox: Studies in clinical imaging show that as AI and digital tools made X-ray reading cheaper, the volume of scans performed increased by 98%, keeping radiologists busy with “exception management.”

Veeva Systems Vault Migration: Veeva’s strategic move to migrate its Life Sciences CRM from Salesforce to its proprietary Vault infrastructure is cited as the primary reason for its market resilience during the 2026 crash.

Jevons Paradox

Further reading:

The Entity AI Framework [Part 1] (22 June 2025): We are entering an age where every institution (every country, company, city, brand, and belief) will have a voice.

The Entity Framework (Part 2) (4 July 2025)

Entity AI, Swarms, and the Future of Work (18 July 2025): The Asymmetric Podcast: Talking AI Frameworks

Don’t Build a Company. Build a Swarm. [The Swarming Framework] (17 June 2025): For solopreneurs, creators, builders, and anyone tired of scaling with headcount.

The Immortality Stack Framework (14 June 2025)

