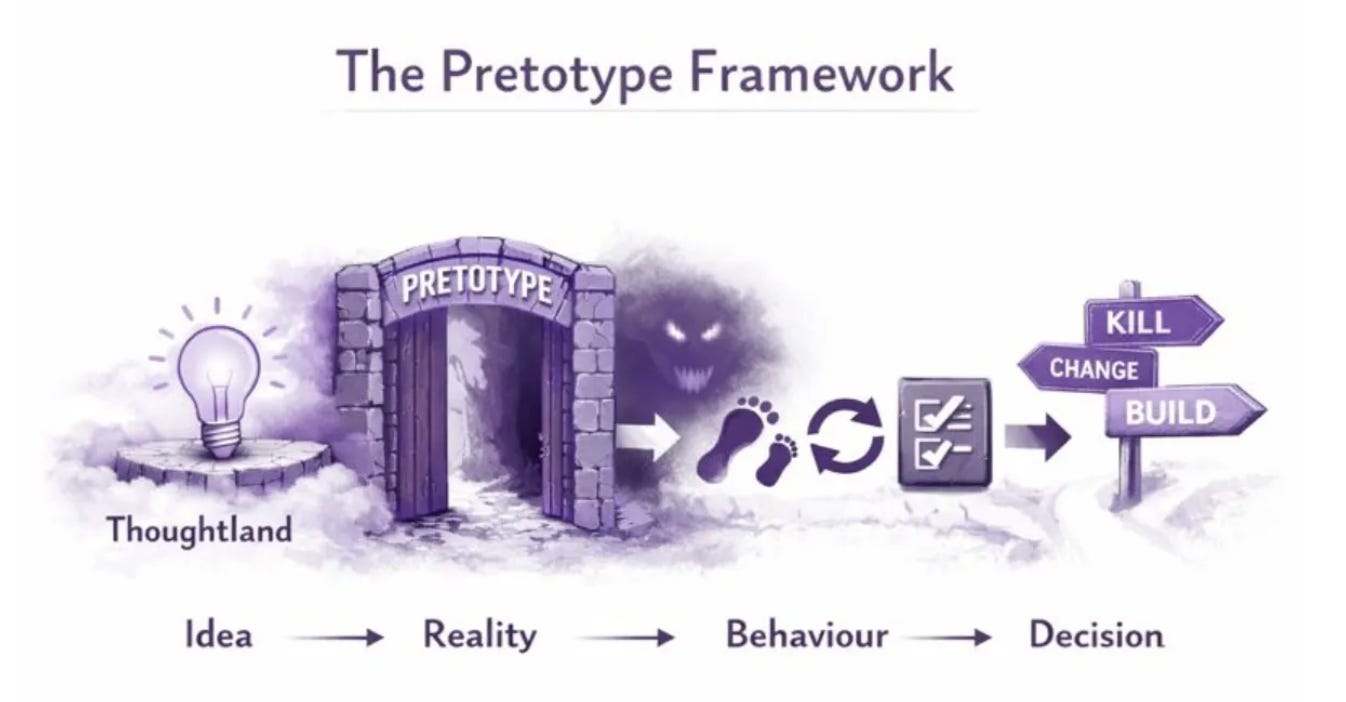

The Pretotype Framework

Make sure you are building the right 'it' before you build it right

TL;DR

Most new ideas fail, even when they are executed well. The most common mistake is committing early to the wrong idea and then learning expensively.

Pretotyping exists to answer one question only: does this ‘it’ deserve to exist at all?

It does that by forcing behaviour early, before costs build up, teams form, identities attach, incentives lock in, and growth systems amplify the wrong thing.

In an AI-first world, that amplification happens faster and with less friction than ever, so it is even more important to get it right early.

Pretotyping is about creating the right xemes early, while changes are still cheap.

It is how you kill the wrong idea early enough that it reads as learning, not a write-off.

I first came across pretotyping more than a decade ago, when I met Alberto Savoia, the inventor of the concept, during a visit to Google.

While I understand the framework and have used it many times in the years since, I still fall into the trap of ‘thoughtland’ - spending resources, time and money on spinning my wheels on incremental improvements to my ideas, while missing the point that no one wants the thing I’m building.

AI increases the odds of falling into this trap. It lowers the cost of building, raises the quality of illusion, and makes it dangerously easy to confuse plausibility with demand.

To understand pretotyping, let’s call whatever we are building ‘it’.

What is an ‘it’?

An ‘it’ is not a product.

It is the thing before the product.

It is the idea that keeps resurfacing in meetings.

The feature someone is convinced users will love.

The startup concept that sounds right when you explain it out loud.

The AI use case that feels obvious once a model can do the task.

The automation you are drawn to because it is now technically possible.

An ‘it’ is a hypothesis that underpins the essence of your idea.

We will use ‘it’ as a noun. Plural: ‘its’.

Every product, company, feature, agent, workflow, or system starts life as an ‘it’.

Most ‘its’ are wrong. That is how the real world works.

The danger is not that ‘its’ are wrong. The danger is that we let them become important before they have earned their survival.

Once an ‘it’ attracts resources, status, or identity, the escalating layers of sunk cost – time, team, narrative, reputation, identity – make killing it feel like personal loss rather than learning. In an AI world, the climb happens faster. You can build more, demo more, and persuade more people before reality has had a chance to object.

Pretotyping exists to keep ‘its’ mortal until they have earned the right to live.

The law of failure

Most new ‘its’ fail. They fail even when execution is good.

Execution quality is a weak early signal. In an AI-first environment it is an even weaker one. Models can write clean copy, generate fluent UX, simulate conversation, and produce credible outputs long before anyone actually needs the thing. What looks like strong execution may just be a good prompt and a forgiving demo context.

Most innovation still fails for the same reason it always has. The premise is wrong. The market does not want the thing often enough, badly enough, or repeatedly enough to justify what follows. And even if it does, it is not willing to pay the price, put in the effort or use the thing again.

You cannot repeal this law. You cannot out-compute it.

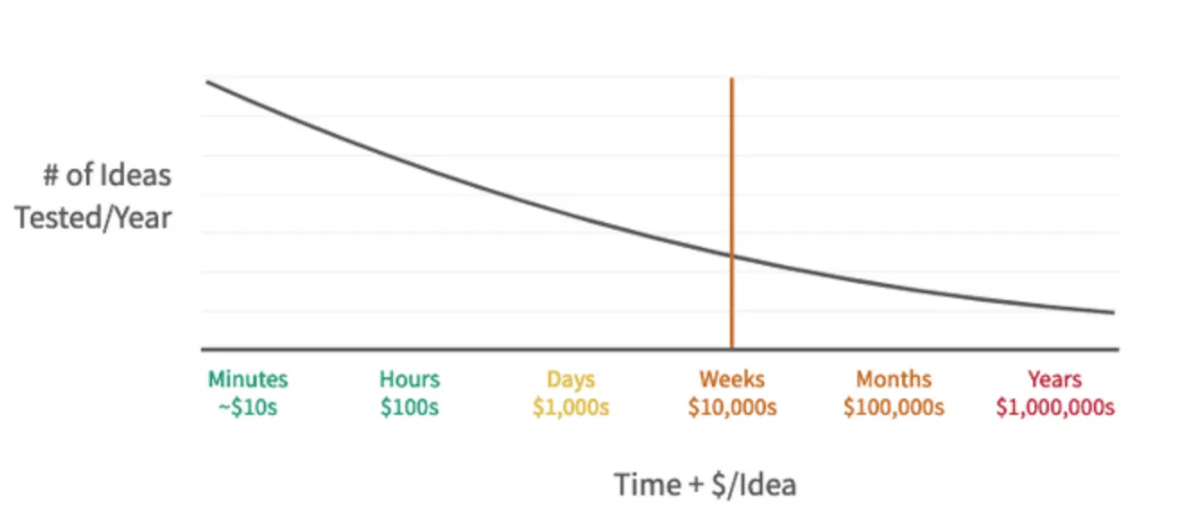

You get to decide when you want to pay for learning. Pretotyping pays early, when the bill is small.

Thoughtland

Most ‘its’ and their creators spend far too long in Thoughtland.

Thoughtland is where ideas live safely. Slides are built. Opinions accumulate. Smart people argue both sides. Confidence grows. Meetings happen. Doubt grows. Everyone feels thoughtful and busy. Whiteboards get scribbled on. Nothing real happens.

AI supercharges Thoughtland. You can generate infinite variations, scenarios, personas, and forecasts without ever leaving the room. You can argue both sides better than before. You can produce artefacts that look like progress. None of this forces behaviour to show up.

Thoughtland produces two errors. Sometimes it produces false negatives: ideas that might have worked are abandoned because opinion overwhelms evidence. More often, it produces false positives: the idea gathers enough supportive output, narrative, or internal conviction that people commit, long after a cheap test would have shown the premise was weak.

False negatives hurt pride.

False positives cost years.

Pretotyping is biased toward avoiding false positives. In an AI world, where supportive output is cheap to generate, that bias matters even more.

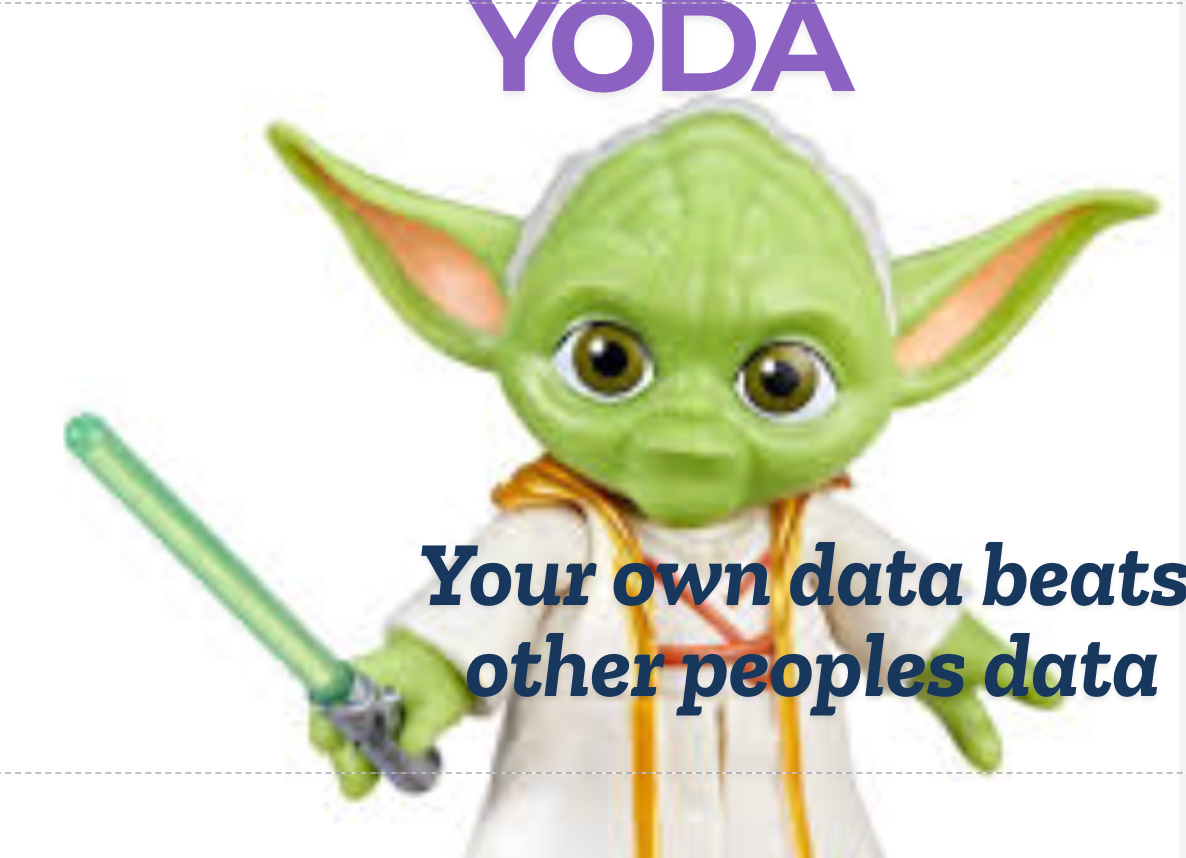

YODA: Your Own Data Beats Other People’s Data

Thoughtland feels productive because it is rich in other people’s data. Slides, benchmarks, case studies, expert opinions, AI-generated analysis, and confident projections all accumulate quickly. None of it belongs to your ‘it’.

Pretotyping is built on a simple rule: your own data beats other people’s data.

Your own data means behaviour generated by your ‘it’, in front of real users, under real constraints. It is local, messy, incomplete, and often disappointing.

Other people’s data is useful later. It helps with execution, optimisation, and scale. Used too early, it becomes a substitute for reality. AI dramatically increases the volume and polish of other people’s data. It does not make it more relevant to your specific premise.

Pretotyping is the discipline of refusing to let other people’s data answer a question that only your own data can resolve.

Three ways to deal with an ‘it’

When you have an ‘it’, there are only three honest paths.

You can do nothing with it.

You can productype it.

Or you can pretotype it.

Doing nothing feels safe and kills many good ideas.

Productyping feels heroic. By productype I mean a prototype with commitment: staffing, roadmaps, narratives, internal momentum, and increasingly, AI infrastructure that makes reversal harder than it looks. When it works, it looks decisive. When it fails, it creates a long, slow unwinding.

Pretotyping is the uncomfortable middle. It says: I don’t trust my intuition, my models, or my demos enough to go all-in, but I respect the idea enough to let reality speak.

Pretotyping vs prototyping

A pretotype answers one question:

If this existed in some crude form, would anyone actually use it?

Prototyping asks different questions.

Can we build it?

Will it work?

How good can it be?

What will it cost?

How will people behave once it exists at scale?

AI collapses the cost of answering the second set of questions. That is why the first question gets skipped. Teams rush from “the model can do this” to “we should build it” without earning the premise. Growth loops, swarms, funnels, and agents then amplify something that should never have survived first contact with reality.

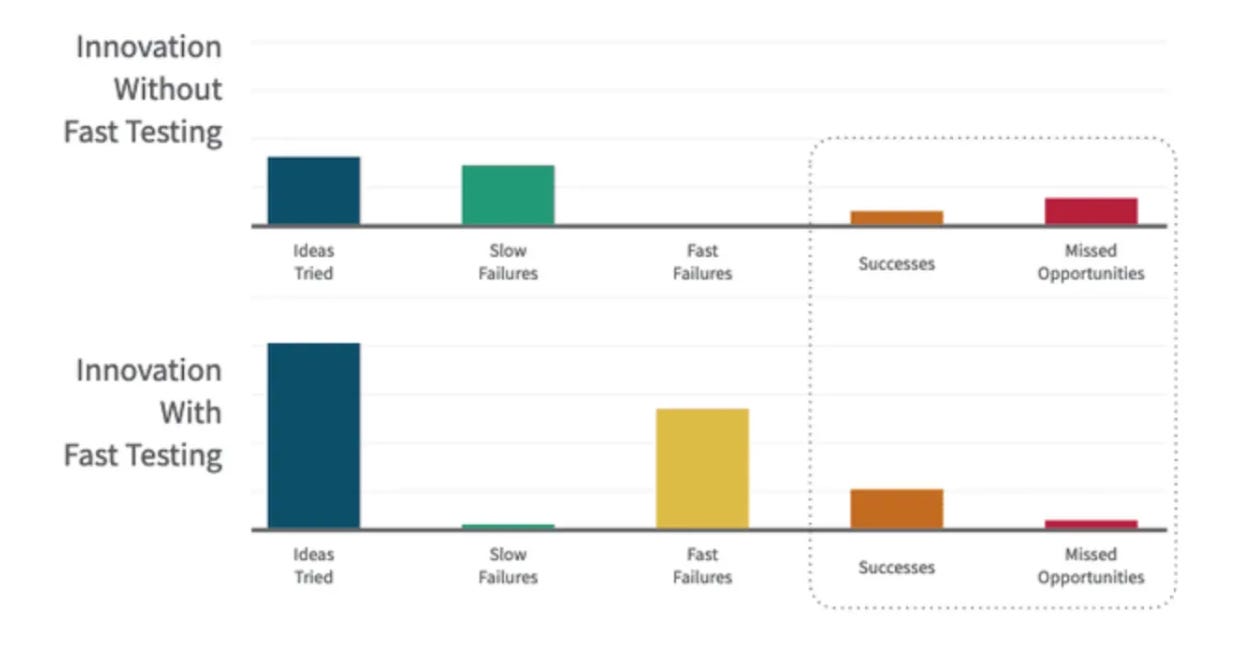

chart from pretotyping.org

Pretotyping exists to prevent expensive self-deception in a world where self-deception has become cheap.

Pretotyping as early xeme creation

Seen through the Xeme Framework, pretotyping is about creating early xemes that define whether further xemes are warranted in the current direction, or whether we should change direction.

A xeme is learning earned through real behaviour and embedded into what the system does next. Pretotyping pulls xeme creation forward in time, before architectures, incentives, prompts, agents, and narratives. Each pretotype forces specific behavioural xemes to show up: do people act at all, do they come back, do they trust the system, do they delegate judgment, does behaviour survive repetition once the novelty of “AI did it” fades.

Once you start prototyping, scaling, or swarming, you still create xemes, but they are expensive ones. In AI systems, bad xemes with false positive/negative learnings can get embedded into workflows, defaults, and training data. Killing them late hurts more than it looks.

chart from pretotyping.org

AI changes one important thing in pretotyping: you can now run several pretotypes in parallel. Different promises, different framings, different levels of autonomy, all tested against the same underlying ‘it’. Convergent behaviour across parallel tests is a stronger signal than any single success.

Pretotyping cares about xeme quality at the early moment when better aim can still change the whole future trajectory.

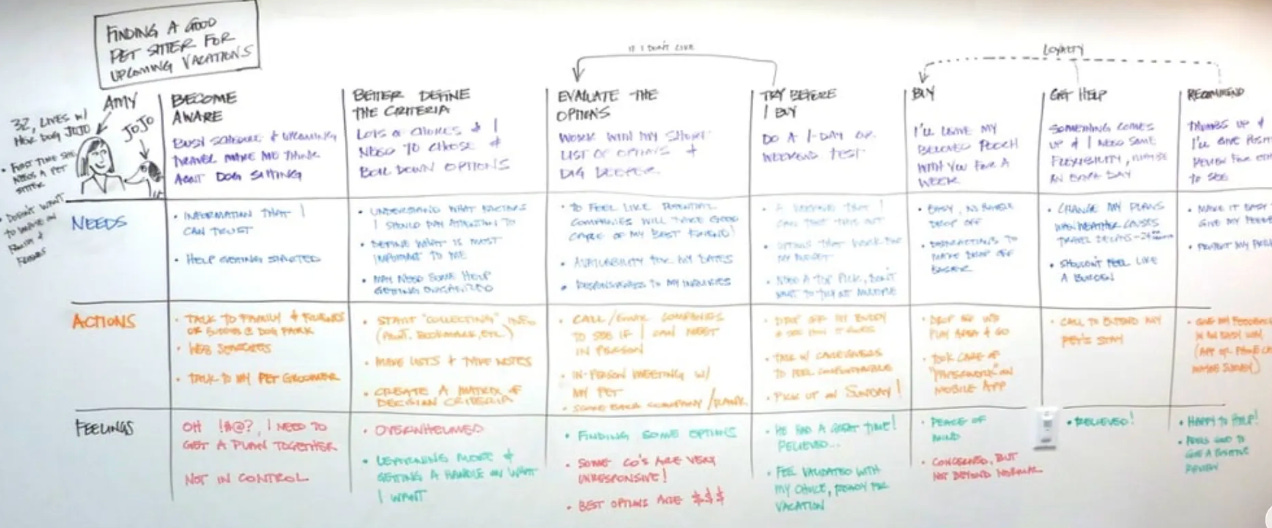

What pretotyping is actually trying to learn

Pretotyping stays deliberately narrow. It is not trying to answer everything. It is trying to answer just enough to decide whether to proceed.

Initial Level of Interest (ILI) asks whether there is any real pull at all.

ILI = actions taken ÷ opportunities offered

The ratio only means something if the action carries real intent for that category. A click is intent for a newsletter. A click is curiosity for an AI demo. In practice, you choose the smallest irreversible or effortful action that still implies real intent: a deposit, a booking, a waitlist with friction, a referral, a willingness to try it twice, or a willingness to hand over real data rather than synthetic examples.
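The ratio above can be sketched in a few lines of Python. This is only an illustration: the function name `initial_level_of_interest` and the numbers in the example are assumptions made up for this sketch, not real data and not part of any published tooling.

```python
def initial_level_of_interest(actions_taken: int, opportunities_offered: int) -> float:
    """ILI = actions taken / opportunities offered, as a fraction between 0 and 1."""
    if opportunities_offered <= 0:
        raise ValueError("ILI is undefined without at least one real opportunity")
    if not 0 <= actions_taken <= opportunities_offered:
        raise ValueError("actions taken must be between 0 and opportunities offered")
    return actions_taken / opportunities_offered


# Hypothetical run: 400 people saw the offer, 18 paid a deposit.
# Only the deposit counts as the action, because it carries real intent.
ili = initial_level_of_interest(actions_taken=18, opportunities_offered=400)
print(f"ILI = {ili:.1%}")
```

The arithmetic is trivial on purpose. The hard part is deciding, before the run, which action is allowed into the numerator.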

Ongoing Level of Interest (OLI) asks whether interest survives novelty. Will it become a habit? This matters more with AI than almost anything else. Early usage often reflects surprise, play, or curiosity. OLI tells you whether the behaviour survives once the system becomes familiar, imperfect, and occasionally wrong.

Put simply, OLI measures repeat rate, stickiness, and engagement.

Strong decay often means behaviour snaps back to an old equilibrium. Stabilisation suggests compatibility with existing habits. Growth suggests the set point is shifting.
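One rough way to label those three shapes from a series of repeat rates is sketched below. The function name `oli_trend`, the 5-point tolerance band, and the choice to look only at the second half of the series (so that early novelty does not dominate) are all assumptions for illustration, and the sample series are invented.

```python
def oli_trend(repeat_rates: list[float], tolerance: float = 0.05) -> str:
    """Label an OLI series as 'decay', 'stabilisation', or 'growth'.

    repeat_rates: share of users who came back in each period, oldest first.
    tolerance: assumed band within which a change counts as flat.
    """
    if len(repeat_rates) < 3:
        raise ValueError("need at least three periods to look past the novelty phase")
    # Compare the latest period with the midpoint of the series,
    # ignoring the earliest periods where novelty inflates usage.
    midpoint = repeat_rates[len(repeat_rates) // 2]
    change = repeat_rates[-1] - midpoint
    if change < -tolerance:
        return "decay"
    if change > tolerance:
        return "growth"
    return "stabilisation"


# Invented weekly repeat rates for three hypothetical pretotypes.
print(oli_trend([0.60, 0.45, 0.30, 0.15]))  # keeps falling
print(oli_trend([0.60, 0.40, 0.38, 0.39]))  # drops, then holds
print(oli_trend([0.30, 0.30, 0.35, 0.45]))  # climbs after the novelty phase
```

A real analysis would want more periods and some view of noise. The point of the sketch is only that the label should come from what happens after novelty fades, not from the first week.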

Directional economics asks whether there is any plausible shape in which this could work, and what the rough unit economics would need to look like for the ‘it’ to succeed.

The decision rule

When ILI is weak in your own data, you kill it and move on.

When ILI is strong, OLI stabilises or improves, and the directional economics look sane, you earn the right to prototype.

When ILI is strong and OLI collapses, you change the ‘it’: the audience, the promise, the level of autonomy, or the role the AI plays.

This rule matters more when iteration is cheap and persuasion is easy.
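The rule can be written down as a tiny function, which makes it harder to renegotiate after the results come in. This is a sketch under assumptions: the names `Decision` and `decide` are hypothetical, what counts as "strong" or "holds" is left to you to define beforehand, and the case where ILI and OLI look good but the economics do not is not covered by the rule as stated, so it is mapped to "change" here as a guess.

```python
from enum import Enum


class Decision(Enum):
    KILL = "kill"
    CHANGE = "change"
    BUILD = "build"


def decide(ili_strong: bool, oli_holds: bool, economics_sane: bool) -> Decision:
    """Apply the kill / change / build rule to three pre-agreed judgments."""
    if not ili_strong:
        # Weak initial interest in your own data: kill it and move on.
        return Decision.KILL
    if oli_holds and economics_sane:
        # Strong ILI, stable or improving OLI, sane economics: earn the right to prototype.
        return Decision.BUILD
    # Strong ILI but OLI collapses: change the 'it'.
    # Assumption: strong ILI and OLI with weak economics is also treated as "change".
    return Decision.CHANGE


print(decide(ili_strong=True, oli_holds=False, economics_sane=True))
```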

Failure is the monster you want to meet early

Failure is a monster that lives underground. Its job is to eat bad ‘its’. If you do not feed it, it waits. If you feed it something small, it eats that. If you feed it something big, it eats that too.

AI does not tame the monster. It just lets you build bigger bait faster. Pretotyping is how you keep the bait small.

Pretotyping techniques

Each pretotyping technique is a different kind of bait. Each is designed to tempt out a specific failure, not to prove that the idea is good. These techniques are just different ways of generating your own data, early enough that it still counts.

Fake Door

Fake Door pretotypes test: is anyone interested enough to try to learn more?

Mechanically, you put the offer in front of real users as a button, link, or call to action. When someone clicks, the next step is honest: “coming soon”, “join the waitlist”, “request access”.

The failure monster you are tempting out is lack of demand. People say they like the idea, but when given a chance to act, they do nothing.

The sign that matters is a deliberate attempt to proceed, in a context where ignoring you was easy.

Notion AI (2022) – early waitlist prompt inside the product.

Before rolling Notion AI out broadly, Notion placed a simple “Try Notion AI” entry point inside the existing app that led to a waitlist. The feature was not available yet. The test was whether users would actively try to access AI assistance inside their workflow, not whether they said they wanted AI in surveys.

What makes this a Fake Door is that the behaviour happened before delivery. The click itself was the learning.

Pinocchio

Pinocchio pretotypes test belief.

They ask whether people believe the thing exists strongly enough to behave as if it does.

Mechanically, you create a lifeless representation that implies functionality: a video, mock interface, scripted flow, or walkthrough. The system cannot actually deliver the outcome. You observe whether users mentally adopt it anyway.

The failure monster you are tempting out is weak belief. People find the idea interesting, but not convincing enough to behave as if it were real.

The sign that matters here is users asking real questions, imagining use, or planning behaviour around something that does not yet work.

Dropbox’s explainer video.

Before Dropbox had a product ready for public release, they released a short demo video showing files syncing effortlessly across devices. Users signed up in large numbers and shared the video. The product was not yet available to them. The behaviour showed belief strong enough to change expectations and intent.

This remains relevant because the technique is about belief, not technology. AI makes Pinocchio easier to do, and more dangerous to misread.

Mechanical Turk

Mechanical Turk pretotypes test outcome value.

They ask whether the result itself matters enough, even when delivery is manual, slow, and visible.

Mechanically, humans openly deliver the outcome. There is no illusion of automation. The experience may be clumsy. That is the point.

The failure monster you are tempting out is that the outcome does not matter enough to earn repeat behaviour.

The sign that matters is people tolerating imperfect delivery and still coming back.

Scale AI’s early data-labeling service.

Before building large-scale automation, Scale relied heavily on human labelers delivering training data to customers. Clients were buying outcomes, not systems. The test was whether clean labeled data mattered enough to justify cost and friction before automation improved margins.

This fits Mechanical Turk because value was tested before efficiency.

Wizard of Oz

Wizard of Oz pretotypes test experience fit.

They ask whether the experience works when it feels real, even if the system behind it is not yet autonomous.

Mechanically, you create the illusion of a complete system while humans secretly operate or assist it. From the user’s perspective, the system appears to work end-to-end.

The failure monster you are tempting out is experience breakdown over time: trust erosion, friction, confusion, or abandonment.

The sign that matters is repeat use under the belief that the system is autonomous.

Amazon Go early cashierless stores.

In the early rollout, the stores delivered the “just walk out” experience, but humans assisted and corrected edge cases behind the scenes. Customers behaved as if the system was fully automated. Amazon observed whether people trusted the experience enough to shop normally and return.

This was a Wizard of Oz because the experience was tested before full autonomy.

Provincial

Provincial pretotypes test real-world friction.

They ask whether the idea survives in one specific, constrained environment.

Mechanically, you launch the real thing, but only in a deliberately small context: one city, one customer segment, one use case.

The failure monster you are tempting out is the real world killing the idea.

The sign that matters is an ‘it’ that survives.

Monzo’s UK-only rollout before international expansion.

Monzo launched as a fully real bank, but only in one geography with a controlled user base. The provincial constraint forced the model to prove itself under real regulatory and behavioural conditions before scaling.

Re-label

Re-label pretotypes test meaning.

They ask whether the idea is right but the promise is wrong.

Mechanically, you change language, framing, or positioning without changing the underlying thing.

The failure monster you are tempting out is demand being driven by meaning rather than performance.

The sign that matters is a behavioural shift caused purely by wording.

Slack reframing from “IRC replacement” to “where work happens”.

The product did not materially change. The framing did. Adoption broadened dramatically once the promise shifted from a technical tool to a work coordination space.

Pretend-to-Own

Pretend-to-Own pretotypes test commitment.

They ask whether your concept is strong enough to tempt your users to put some skin in the game.

Mechanically, users are asked to behave as if they already own or rely on the thing: deposits, reservations, paid pilots, data handover, calendar locks.

The failure monster you are tempting out is interest collapsing once something real is at stake.

The sign that matters is follow-through.

Tesla Model 3 reservations.

Customers placed refundable deposits years before delivery. The test was not attention. It was willingness to commit money early.

This separated excitement from seriousness at scale.

Stripped Tease MVP

Stripped Tease MVPs test repeat behaviour.

They ask whether the behaviour loops once everything else is removed.

Mechanically, you strip the experience down to the single behaviour that matters most.

The failure monster you are tempting out is one-time novelty.

The sign that matters is second and third use without prompting.

Instagram’s pivot from Burbn.

The team removed everything except photo capture, filters, and sharing. The stripped experience either created a loop or died. It lived.

This technique remains essential in tech and AI products that otherwise sprawl too early.

Kill, change, or build

Pretotyping has only three valid outcomes. Kill the ‘it’. Change the ‘it’. Or earn the right to build it properly.

Most ‘its’ should die here. Pretotyping works by creating xemes early enough to avoid big costs.

The process acknowledges that the failure monster can’t be killed; it can only be tricked. Feed it small meals early so it never gets the chance to eat the whole project.

Define the ‘it’ in one sentence. Choose the smallest bait that could expose the monster. Decide what action counts before you run it. Run it for a fixed number of real opportunities. Decide kill, change, or build.
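Those five steps amount to writing the test down before running it. A minimal sketch of such a record follows. The class name `PretotypePlan`, its fields, and the example values are hypothetical and invented for illustration. The only idea taken from the steps is that the counted action and the number of opportunities are fixed up front.

```python
from dataclasses import dataclass


@dataclass
class PretotypePlan:
    it: str               # the 'it' in one sentence
    bait: str             # the smallest technique that could expose failure
    counted_action: str   # decided before the run, never after
    opportunities: int    # fixed number of real opportunities
    seen: int = 0
    actions: int = 0

    def __post_init__(self) -> None:
        if self.opportunities <= 0:
            raise ValueError("a run needs at least one real opportunity")

    def record(self, acted: bool) -> None:
        """Log one real opportunity and whether the counted action happened."""
        if self.seen >= self.opportunities:
            raise RuntimeError("run is complete; do not extend it after the fact")
        self.seen += 1
        self.actions += int(acted)

    def ili(self) -> float:
        """Return actions / opportunities, but only once the fixed run has finished."""
        if self.seen < self.opportunities:
            raise RuntimeError("run is not finished yet")
        return self.actions / self.opportunities


# Invented example: a five-opportunity Fake Door run.
plan = PretotypePlan(
    it="Freelancers will pay for automated invoice chasing",
    bait="Fake Door",
    counted_action="joins the waitlist with a work email",
    opportunities=5,
)
for acted in [True, False, False, True, False]:
    plan.record(acted)
print(f"ILI = {plan.ili():.0%}")
```

Refusing to report a result early, or to add opportunities late, is the design choice that matters here. It stops the run from being stretched until the answer looks better.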

Bibliography & Further Reading

The Xeme Framework draws on Alberto Savoia’s seminal work on pretotyping. You can learn more about his book The Right It here

You can also watch him explain the concept in his own words here

May every sunset bring you peace!